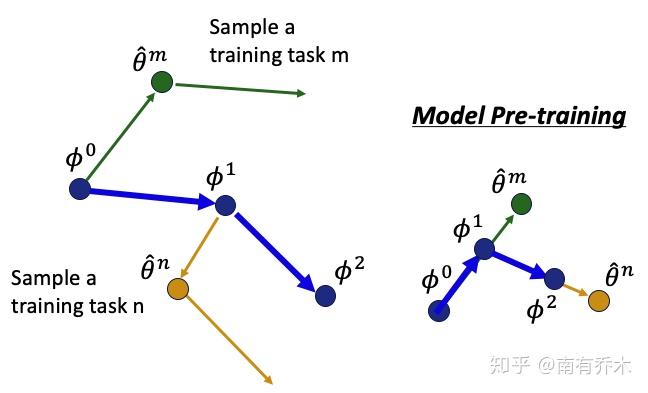

Large pretrained language models (PLMs) are often domain- or task-adapted via finetuning or prompting. Finetuning requires modifying all of the parameters and having enough data to avoid overfitting, while prompting requires no training and few examples but limits performance. Instead, we prepare PLMs for data- and parameter-efficient adaptation by learning to learn the difference between general and adapted PLMs. This difference is expressed in terms of model weights and sublayer structure through our proposed dynamic low-rank reparameterization and learned architecture controller. Experiments on few-shot dialogue completion, low-resource abstractive summarization, and multi-domain language modeling show improvements in adaptation time and performance over direct finetuning or preparation via domain-adaptive pretraining. Ablations show that our task-adaptive reparameterization (TARP) and model search (TAMS) components individually improve on other parameter-efficient transfer methods such as adapters and on structure-learning methods such as learned sparsification.

In general, self-supervised objectives used for PLMs assume little about the nature of downstream tasks. Earlier works suggested that task-awareness is unnecessary for PLMs of sufficient scale; for example, Raffel et al. (2020) found that multi-task learning underperformed pretrain-finetune for the largest T5 models on multi-format question answering. Later work (2020) showed, however, that further pretraining on unlabeled text from the downstream task (task-adaptive pretraining, or TAPT) or a related domain (DAPT) consistently improved adaptation performance. Other work found that by greatly improving the number and balance of tasks, one can utilize a multitask objective after pretraining and achieve gains in proportion to the number of tasks. A 2020 analysis argues that the impressive few-shot prompting ability of GPT-3 comes from "implicit" meta-learning (Schmidhuber, 1987; Bengio et al., 1990), termed in-context learning, where the outer loop is performed by self-supervised pretraining and the inner loop is performed by forward passes on implicit examples in unlabeled texts.

Adaptation data is typically limited, making it easy for large models to overfit. Previous work uses very shallow CNNs (Finn et al., 2017), adapts only scale-and-shift parameters atop the original model (Sun et al., 2019), or applies various general regularization techniques such as weight decay, label smoothing, dropout, early stopping, and ℓ1 regularization (Madotto et al., 2019; Song et al., 2020). In contrast, we propose learning dynamic low-rank reparameterizations g_{Φ_i} (Section 2.1) of the base model such that Θ_i(x) = g_{Φ_i}(Θ_LM, x) for task T_i. Here, Φ is a small set of new parameters that are adapted into task-specific Φ_i when finetuning. Notably, we modify MAML to incorporate these parameter-efficient modules, so that during task adaptation to T_i in both meta-training (the inner loop) and meta-testing (novel tasks), we only adapt Φ → Φ_i instead of Θ → Θ_i, speeding up both phases and improving overall performance. Though some work explores the benefits of joint training or fusion of parameter-efficient modules (Stickland and Murray, 2019; Lin et al., 2020; Pfeiffer et al., 2021), prior work has not explored meta-learning to learn these adaptations in a task distribution-aware setting.

While the Transformer has proven to be a robust general-purpose architecture, recent work has shown that the optimal attention-then-FFN sublayer structure can vary across tasks (e.g., Sandwich Transformers; Press et al., 2020). However, previous data-driven sublayer searches are often task-agnostic (e.g., So et al., 2021), with the sublayer search carried out before pretraining. Meta-learning enables learning data-driven sublayers after pretraining, in a differentiable, task-adaptive manner (Section 2.2). Instead of running a separate search per task as in previous methods (DARTS; Liu et al., 2019a), we propose meta-learning a task-aware architecture controller that generalizes to new tasks when searching neural architectures, by learning to directly generate task-specific sublayer structures from the dataset.
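To make the idea of adapting only Φ → Φ_i while the base weights stay frozen more concrete, here is a minimal pure-Python sketch. It rests on strong simplifying assumptions: a single linear layer stands in for Θ_LM, the reparameterization is a static additive low-rank update W_base + U Vᵀ (not the dynamic, input-dependent form the text describes), and only the inner-loop adaptation is shown, with no outer meta-update. The names `low_rank_forward`, `task_loss`, and `adapt_phi`, and the toy task itself, are hypothetical and chosen only for illustration.

```python
# Illustrative sketch only: a static additive low-rank update on one linear
# layer. This is NOT the paper's dynamic reparameterization; names are made up.

def low_rank_forward(W_base, U, V, x):
    """Return (W_base + U V^T) x without materialising the full update."""
    rank = len(U[0])
    vtx = [sum(V[j][k] * x[j] for j in range(len(x))) for k in range(rank)]
    return [
        sum(w * xj for w, xj in zip(W_base[i], x))
        + sum(U[i][k] * vtx[k] for k in range(rank))
        for i in range(len(W_base))
    ]

def task_loss(W_base, U, V, data):
    """Summed squared error over (x, y) pairs of one task."""
    total = 0.0
    for x, y in data:
        pred = low_rank_forward(W_base, U, V, x)
        total += 0.5 * sum((p - t) ** 2 for p, t in zip(pred, y))
    return total

def adapt_phi(W_base, U, V, data, lr=0.1, steps=200):
    """Inner loop: gradient descent on Phi = (U, V) only; W_base is never updated."""
    U = [row[:] for row in U]  # copy so the shared initial Phi is not mutated
    V = [row[:] for row in V]
    rank = len(U[0])
    for _ in range(steps):
        grad_U = [[0.0] * rank for _ in U]
        grad_V = [[0.0] * rank for _ in V]
        for x, y in data:
            pred = low_rank_forward(W_base, U, V, x)
            err = [p - t for p, t in zip(pred, y)]
            vtx = [sum(V[j][k] * x[j] for j in range(len(x))) for k in range(rank)]
            ut_err = [sum(U[i][k] * err[i] for i in range(len(err))) for k in range(rank)]
            for i in range(len(U)):
                for k in range(rank):
                    grad_U[i][k] += err[i] * vtx[k]
            for j in range(len(V)):
                for k in range(rank):
                    grad_V[j][k] += x[j] * ut_err[k]
        U = [[u - lr * g for u, g in zip(ur, gr)] for ur, gr in zip(U, grad_U)]
        V = [[v - lr * g for v, g in zip(vr, gr)] for vr, gr in zip(V, grad_V)]
    return U, V

# Frozen "pretrained" weights (3 outputs, 4 inputs) and a rank-1 initial Phi.
W_base = [[1.0, 0.0, 0.0, 0.0],
          [0.0, 1.0, 0.0, 0.0],
          [0.0, 0.0, 1.0, 0.0]]
U0 = [[0.0], [0.0], [0.0]]          # zero init: the adapted model starts at the base model
V0 = [[0.1], [0.1], [0.1], [0.1]]

# Toy task whose targets differ from the base model by a rank-1 shift.
u_star, v_star = [1.0, -1.0, 0.5], [0.5, 0.0, 0.0, 0.5]
inputs = [[1.0 if j == k else 0.0 for j in range(4)] for k in range(4)]
data = [
    (x, [sum(W_base[i][j] * x[j] for j in range(4))
         + u_star[i] * sum(v * xj for v, xj in zip(v_star, x))
         for i in range(3)])
    for x in inputs
]

loss_before = task_loss(W_base, U0, V0, data)
U_i, V_i = adapt_phi(W_base, U0, V0, data)
loss_after = task_loss(W_base, U_i, V_i, data)
n_adapted = sum(len(r) for r in U_i) + sum(len(r) for r in V_i)
n_base = sum(len(r) for r in W_base)
```

In this toy setting the task-specific parameters number 7 against 12 frozen base weights; with realistic layer widths and a small rank the gap is far larger, which is the intuition behind adapting Φ rather than Θ. A full meta-learning setup would additionally update the shared initial Φ in an outer loop across tasks, which this sketch omits.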
